HPC Clusters - High-Performance Computing Clusters for High Demands

HPC clusters are typically found in technical and scientific environments such as universities, research institutions, and corporate research departments. Often the tasks performed there, such as weather forecasts or financial projections, are broken down into smaller subtasks in the HPC cluster and then distributed to the cluster nodes.

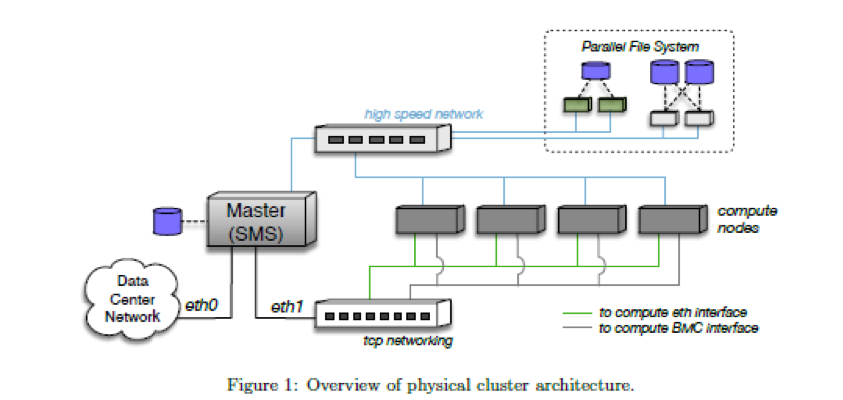

The cluster's internal network connection is important because the cluster nodes exchange many small pieces of information. These have to be transported from one cluster node to another in the fastest way possible. For this reason, the latency of the network must be kept to a minimum. Another important aspect is that the required and generated data can be read or written by all cluster nodes of an HPC cluster at the same time.
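
Why latency matters more than bandwidth for small messages can be shown with a rough cost model: delivery time is approximately the fixed latency plus the payload size divided by the bandwidth. The sketch below is only an illustration. The function name and the latency figures (50 µs for a TCP network, 1 µs for a low-latency interconnect) are assumptions chosen for the example, not measurements of any particular system.

```python
def transfer_time_us(message_bytes, latency_us, bandwidth_gbit_s):
    """Rough delivery time of one message in microseconds.

    Model: time = fixed latency + payload / bandwidth.
    1 Gbit/s corresponds to 1000 bits per microsecond.
    """
    bits = message_bytes * 8
    return latency_us + bits / (bandwidth_gbit_s * 1000)


def latency_share(message_bytes, latency_us, bandwidth_gbit_s):
    """Fraction of the delivery time that is pure latency."""
    total = transfer_time_us(message_bytes, latency_us, bandwidth_gbit_s)
    return latency_us / total


# Assumed, illustrative figures (not measured values):
TCP_10G = {"latency_us": 50.0, "bandwidth_gbit_s": 10.0}
LOW_LATENCY_56G = {"latency_us": 1.0, "bandwidth_gbit_s": 56.0}

if __name__ == "__main__":
    for size in (64, 4096, 1_000_000):
        tcp = transfer_time_us(size, **TCP_10G)
        fast = transfer_time_us(size, **LOW_LATENCY_56G)
        print(f"{size:>9} bytes: TCP {tcp:10.2f} us, low-latency {fast:10.2f} us")
```

Under these assumptions, a 64-byte message spends almost all of its delivery time waiting on latency, so a faster link alone barely helps. Only for large transfers does the bandwidth term dominate.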

Structure of an HPC Cluster

- The cluster server, also called the master or frontend, manages access and provides the programmes and the home data area.

- The cluster nodes do the computations.

- A TCP network is used to exchange information in the HPC cluster.

- A high-performance network is required to enable data transmission with very low latency.

- The High-Performance Storage (Parallel File System) enables the simultaneous write access of all cluster nodes.

- The BMC Interface (IPMI Interface) is the access point for the administrator to manage the hardware.

All cluster nodes of an HPC cluster are normally equipped with the same processor type from a single manufacturer; different manufacturers and processor types are usually not combined in one HPC cluster. A mixed configuration of main memory and other resources is possible, but it must be taken into account when configuring the job control software.
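
To illustrate why mixed memory sizes matter to the job control software, here is a minimal sketch of the kind of filtering a scheduler has to perform: a job that needs a given amount of memory may only be placed on nodes that have it. The `Node` class, the node names, and the `eligible_nodes` helper are hypothetical. The node counts and memory sizes are borrowed from the example configuration listed further down this page. A real scheduler such as Slurm or PBS Professional handles this through its own node definitions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Node:
    name: str        # hypothetical host name
    memory_gb: int   # main memory installed in the node
    gpus: int = 0    # number of GPUs in the node


def eligible_nodes(nodes, min_memory_gb, min_gpus=0):
    """Return the nodes that satisfy a job's memory and GPU requirements."""
    return [
        n for n in nodes
        if n.memory_gb >= min_memory_gb and n.gpus >= min_gpus
    ]


# Node mix taken from the example configuration on this page;
# the names themselves are made up for illustration.
NODES = (
    [Node(f"small{i:02d}", 64) for i in range(1, 13)]
    + [Node(f"large{i:02d}", 256) for i in range(1, 13)]
    + [Node(f"gpu{i:02d}", 512, gpus=4) for i in range(1, 7)]
)

if __name__ == "__main__":
    print(len(eligible_nodes(NODES, 64)), "nodes can run a 64 GB job")
    print(len(eligible_nodes(NODES, 128)), "nodes can run a 128 GB job")
    print(len(eligible_nodes(NODES, 64, min_gpus=1)), "nodes can run a GPU job")
```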

When are HPC clusters used?

HPC clusters are most effective for computations that can be divided into separate subtasks. An HPC cluster can also run many smaller tasks in parallel. In addition, it can make a single application available to several users at once, which saves cost and time because they can work simultaneously.
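
The idea of subdividing a computation can be sketched in a few lines. The example below splits a range of numbers into chunks, computes a partial result per chunk, and combines the partial results. It is only a local illustration: a thread pool stands in for the cluster nodes, and the function names are made up for this example. On a real cluster the subtasks would typically be distributed through MPI or the job control system.

```python
from concurrent.futures import ThreadPoolExecutor


def split_range(start, stop, parts):
    """Split [start, stop) into `parts` contiguous chunks of near-equal size."""
    base, extra = divmod(stop - start, parts)
    chunks = []
    lo = start
    for i in range(parts):
        # The first `extra` chunks receive one additional element.
        hi = lo + base + (1 if i < extra else 0)
        chunks.append((lo, hi))
        lo = hi
    return chunks


def sum_of_squares(chunk):
    """Subtask: sum of i*i over one chunk."""
    lo, hi = chunk
    return sum(i * i for i in range(lo, hi))


def parallel_sum_of_squares(n, workers=4):
    """Distribute the subtasks to workers and combine the partial sums."""
    chunks = split_range(0, n, workers)
    with ThreadPoolExecutor(max_workers=workers) as pool:
        partials = list(pool.map(sum_of_squares, chunks))
    return sum(partials)


if __name__ == "__main__":
    print(parallel_sum_of_squares(1_000_000))
```

The pattern works because each chunk can be processed without knowing the result of any other chunk. Computations with strong dependencies between steps benefit far less from this kind of distribution.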

HAPPYWARE will be happy to build an HPC cluster for you with various configured cluster nodes, a high-speed network, and a parallel file system. For the HPC cluster management, we rely on the well-known OpenHPC solution to ensure effective and intuitive cluster management.

If you would like to learn more about possible application scenarios for HPC clusters, our HPC expert Jürgen Kabelitz will be happy to help you.

HPC Cluster Solutions from HAPPYWARE

Below we have compiled a number of possible HPC cluster configurations for you:

Frontend or Master Server

- 4U Cluster Server with 24 3.5" drive bays

- SSD for the operating system

- Dual Port 10 Gb/s network adaptor

- FDR Infiniband adaptor

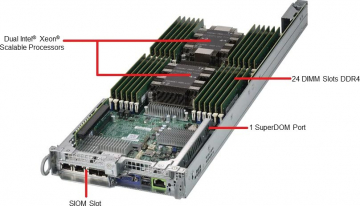

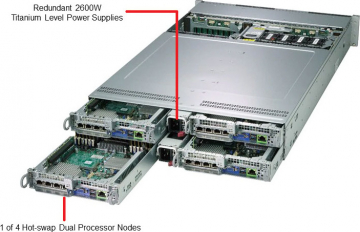

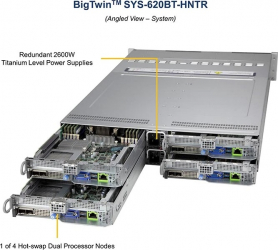

Cluster nodes

- 12 Cluster nodes with dual CPU and 64 GB RAM

- 12 Cluster nodes with dual CPU and 256 GB RAM

- 6 GPU computing systems, each with 4 Tesla V100 SXM2 and 512 GB RAM

- FDR Infiniband and 10 Gb/s TCP network

High-performance storage

- 1 storage system with 32 x NF1 SSD with 16 TB capacity each

- 2 storage systems with 45 hot-swap bays each

- Network connection: 10 Gb/s TCP/IP and FDR Infiniband

HPC Cluster Management - with OpenHPC and xCAT

Managing HPC clusters and their data requires powerful software. To meet this need, we offer two proven solutions: OpenHPC and xCAT.

HPC Cluster with OpenHPC

OpenHPC enables basic HPC cluster management based on Linux and open-source software.

Scope of services

- Forwarding of system logs possible

- Nagios and Ganglia monitoring - Open-source infrastructure monitoring and scalable system monitoring for HPC clusters and grids

- ClusterShell - Event-based Python library for parallel execution of commands on the cluster

- Genders - Static cluster configuration database

- ConMan - Serial Console Management

- NHC - Node health check

- Developer software including EasyBuild, hwloc, Spack, and Valgrind

- Compilers such as the GNU and LLVM compilers

- MPI Stacks

- Job control systems such as PBS Professional or Slurm

- Infiniband support & Omni-Path support for x86_64 architectures

- BeeGFS support for mounting BeeGFS file systems

- Lustre Client support for mounting Lustre file systems

- GEOPM Global Extensible Power Manager

- Support of Intel Parallel Studio XE software

- Support of local software with the Modules software

- Support of nodes with stateful or stateless configuration
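
Cluster tools such as ClusterShell usually address groups of nodes with a compact bracket notation, for example `node[01-12]`. The sketch below shows the idea with the standard library only. The `expand_nodes` helper is hypothetical and handles just a single numeric range. ClusterShell ships its own `NodeSet` class for this purpose, which supports far more than this stand-in.

```python
import re

# One optional bracketed numeric range, e.g. "node[01-12]" or "gpu[1-6]-ib".
_RANGE = re.compile(
    r"^(?P<prefix>[^\[\]]*)\[(?P<lo>\d+)-(?P<hi>\d+)\](?P<suffix>[^\[\]]*)$"
)


def expand_nodes(pattern):
    """Expand 'node[01-03]' to ['node01', 'node02', 'node03'].

    A name without a bracketed range is returned unchanged as a
    one-element list. Zero padding follows the width of the lower bound.
    """
    match = _RANGE.match(pattern)
    if match is None:
        return [pattern]
    lo, hi = match.group("lo"), match.group("hi")
    if int(lo) > int(hi):
        raise ValueError(f"empty range in {pattern!r}")
    width = len(lo)
    prefix, suffix = match.group("prefix"), match.group("suffix")
    return [
        f"{prefix}{i:0{width}d}{suffix}"
        for i in range(int(lo), int(hi) + 1)
    ]


if __name__ == "__main__":
    print(expand_nodes("node[01-12]"))
```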

Supported operating systems

- CentOS 7.5

- SUSE Linux Enterprise Server 12 SP3

Supported hardware architectures

- x86_64

- aarch64

HPC Cluster with xCAT

xCAT (Extreme Cloud Administration Toolkit) enables comprehensive HPC cluster management.

Suitable for the following applications

- Clouds

- Clusters

- High-performance clusters

- Grids

- Data centres

- Render farms

- Online Gaming Infrastructure

- Any other feasible system configuration

Scope of services

- Detection of servers in the network

- Running remote system management

- Provisioning of operating systems on physical or virtual servers

- Diskful (stateful) or diskless (stateless) installation

- Installation and configuration of user software

- Parallel system management

- Cloud integration

Supported operating systems

- RHEL

- SLES

- Ubuntu

- Debian

- CentOS

- Fedora

- Scientific Linux

- Oracle Linux

- Windows

- ESXi

- And many more

Supported hardware architectures

- IBM Power

- IBM Power LE

- x86_64

Supported virtualisation infrastructure

- IBM PowerKVM

- IBM z/VM

- ESXi

- Xen

Performance values for potential processors

The performance values are taken from SPEC (spec.org). Only the SPECrate 2017 Integer and SPECrate 2017 Floating Point results are compared:

| Manufacturer | Model | Processor | Clock rate | # CPUs | # Cores | # Threads | Base Integer | Peak Integer | Base Floating-point | Peak Floating-point |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Gigabyte | R181-791 | AMD EPYC 7601 | 2.2 GHz | 2 | 64 | 128 | 281 | 309 | 265 | 275 |
| Supermicro | 6029U-TR4 | Xeon Silver 4110 | 2.1 GHz | 2 | 16 | 32 | 74.1 | 78.8 | 87.2 | 84.8 |
| Supermicro | 6029U-TR4 | Xeon Gold 5120 | 2.2 GHz | 2 | 28 | 56 | 146 | 137 | 143 | 140 |
| Supermicro | 6029U-TR4 | Xeon Gold 6140 | 2.3 GHz | 2 | 36 | 72 | 203 | 192 | 186 | 183 |
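
Since the systems in the table differ widely in core count, it can help to normalise the base scores per core. The sketch below does this for the four rows above. The variable and function names are chosen for this example. Treat the result as a rough comparison only: SPECrate is a throughput metric for the whole system, so dividing by the core count is a simplification.

```python
# (processor, total cores, base integer, base floating-point),
# copied from the table above.
RESULTS = [
    ("AMD EPYC 7601", 64, 281, 265),
    ("Xeon Silver 4110", 16, 74.1, 87.2),
    ("Xeon Gold 5120", 28, 146, 143),
    ("Xeon Gold 6140", 36, 203, 186),
]


def per_core(results):
    """Map processor name to (base integer, base floating-point) per core."""
    return {
        name: (round(base_int / cores, 2), round(base_fp / cores, 2))
        for name, cores, base_int, base_fp in results
    }


if __name__ == "__main__":
    for name, (int_pc, fp_pc) in per_core(RESULTS).items():
        print(f"{name:<18} integer/core {int_pc:5.2f}  fp/core {fp_pc:5.2f}")
```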

NVIDIA Tesla V100 SXM2: 7.8 TFLOPS at 64-bit, 15.7 TFLOPS at 32-bit, and 125 TFLOPS for tensor operations.
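
For the example configuration on this page (6 GPU computing systems with 4 Tesla V100 SXM2 each), the per-GPU figures can be multiplied out to a theoretical aggregate peak. The sketch below does only that arithmetic. The names are chosen for this example, and the result is an upper bound on paper, not an expected application performance.

```python
# Per-GPU peak figures in TFLOPS, as stated above.
V100_TFLOPS = {"fp64": 7.8, "fp32": 15.7, "tensor": 125.0}

# GPU node counts from the example configuration on this page.
GPU_SYSTEMS = 6
GPUS_PER_SYSTEM = 4


def aggregate_peak_tflops(per_gpu, systems, gpus_per_system):
    """Theoretical peak over all GPUs: per-GPU value times GPU count."""
    count = systems * gpus_per_system
    return {kind: round(value * count, 1) for kind, value in per_gpu.items()}


if __name__ == "__main__":
    totals = aggregate_peak_tflops(V100_TFLOPS, GPU_SYSTEMS, GPUS_PER_SYSTEM)
    for kind, tflops in totals.items():
        print(f"{kind:>6}: {tflops} TFLOPS theoretical peak")
```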

HPC Clusters and more from HAPPYWARE - Your partner for powerful cluster solutions

We are your specialist for individually configured, high-performance clusters, whether you need GPU clusters, HPC clusters, or other setups. We would be happy to build a system that meets your company's needs at a competitive price.

For scientific and educational organisations, we offer special discounts for research and teaching. Please contact us to learn more about these offers.

If you would like to know more about the equipment of our HPC clusters or you need an individually designed cluster solution, please contact our cluster specialist Jürgen Kabelitz. He will be happy to help.